Standardized assessments in times of COVID-19: Understanding short and long-term effects

28 Oct 2020 | Policy-makers, Professionals, School boards, School leaders, Teachers

In many countries worldwide, standardized assessments were adjusted after schools closed to contain the spread of the coronavirus. Because such assessments are commonly used to inform decisions on student progression and/or qualification, as well as to monitor and evaluate school and teacher quality, alternative measures were put in place in many contexts. These include postponing assessments, adapting their administration, or relying on prior assessment results where assessments were cancelled. Recognizing the urgent need for research on the impact of these measures, the ICSEI Research Lab ‘COVID-19 and standardized assessments’ examines the short- and long-term consequences of the adopted measures for students, teachers, schools and school systems. While the Lab will run until February 2021, a key finding so far is that systems that already combine several assessments to inform key decisions and qualification, including teacher assessment, seem to be more robust in dealing with changes.

Marjolein Camphuijsen

School closures and standardized assessments worldwide

On the second of April 2020, 84.8% of learners worldwide were affected by school closures following the outbreak of the coronavirus (UNESCO, 2020). In many contexts, this has meant that adjustments to standardized, summative assessments have been made or are being considered (for an overview see Hares & Moscoviz, 2020). Whereas examinations went ahead as scheduled in a few countries, in many others they were cancelled, postponed or administered online. Where examinations were cancelled, students in some contexts have been allocated grades on the basis of prior assessment results used to predict their performance, or on the basis of teachers’ judgement of previous coursework.

The Netherlands is an example of the latter case. On the 24th of March 2020, the Dutch Minister for Primary and Secondary Education and Media, Arie Slob, announced that central exams in the Netherlands would be cancelled. Students’ central exam scores normally account for 50% of the final grade on the school-leaving certificate that students obtain at the end of secondary education, whereas the other 50% is made up of results obtained in school exams. This year, however, the school exam results fully determined the final grade. In primary education too, the high-stakes standardized test in the final year was cancelled. This took away students’ opportunity to ask the school to reconsider its advice on their transition from primary to secondary education if they scored higher on this final test than the school’s advice would have suggested.
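The 50/50 weighting described above can be illustrated with a minimal sketch. It is a simplification under stated assumptions: the function name `final_grade` and the example grades are hypothetical, and the official Dutch rules on rounding and per-subject calculation are not modelled.

```python
from typing import Optional


def final_grade(school_exam: float,
                central_exam: Optional[float] = None,
                central_weight: float = 0.5) -> float:
    """Combine a school exam grade and a central exam grade.

    Hypothetical helper: in a normal year both parts count for 50%.
    When the central exam is cancelled (central_exam is None), as in
    2020, the school exam grade alone determines the final grade.
    """
    if central_exam is None:
        return school_exam
    return (1 - central_weight) * school_exam + central_weight * central_exam


# Illustrative grades on the Dutch 1-10 scale (made-up values).
normal_year = final_grade(school_exam=7.0, central_exam=5.0)
cancelled_year = final_grade(school_exam=7.0)
print(normal_year, cancelled_year)
```

In this illustration, a student with a stronger school exam than central exam ends up with a higher final grade when the central exam is dropped, and the reverse holds for a student who would have done better on the central exam.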

Short- and long-term effects of alternative assessment measures

The adoption of alternative strategies has led many educational researchers and stakeholders to wonder about the possible effects of the different measures. Their questions concern the bias and fairness of alternative measures for particular groups of students, the legitimacy of and public trust in high-stakes assessments, and student, school and system performance, as well as our understanding of that performance.

In the Netherlands, for example, the announced changes quickly gave rise to a debate on how the measures would affect different groups of students, and whether the assessment cancellations would increase educational inequalities. Research gives reason for concern: early-tracking systems that do not rely on standardized tests are characterized by greater social inequality in educational achievement than systems that do (Bol et al., 2014).

Based on data from previous school years, it has been estimated that cancelling the final standardized test at the end of primary education puts 14,000 students at risk of missing out on an adjusted school advice (CPB, 2020a). In a similar vein, for secondary education, it has been estimated that 8% of the student population would have obtained a different final result had the central exams been cancelled during the 2018-2019 school year (CPB, 2020b). That is, around 7,000 students who failed to graduate in 2019 would have graduated if only the school exam had counted, whereas around 8,000 students who did graduate would have failed under those circumstances.
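The arithmetic behind these secondary-education estimates can be worked through in a few lines. This is a rough sketch only: it assumes that the 8% refers to the two groups of around 7,000 and 8,000 students combined, and the implied cohort size is derived from the rounded figures in the text rather than reported by CPB. All variable names are illustrative.

```python
# Rounded figures as cited in the text (CPB, 2020b).
would_pass_instead = 7_000   # failed in 2019, would have graduated on school exams alone
would_fail_instead = 8_000   # graduated in 2019, would have failed on school exams alone
share_changed_percent = 8    # share of students with a different final result

# Students whose final result would have changed in either direction.
changed_total = would_pass_instead + would_fail_instead

# Net effect on the number of graduates; negative means fewer graduates.
net_graduates = would_pass_instead - would_fail_instead

# ASSUMPTION: the 8% corresponds to changed_total, so the cohort size
# implied by these rounded numbers is changed_total / 8%.
implied_cohort = changed_total * 100 / share_changed_percent

print(changed_total, net_graduates, implied_cohort)
```

Under these assumptions, the two groups largely offset each other: many individual results change, while the estimated net effect on the number of graduates is small. This is why the estimates matter more for questions of fairness to individual students than for the overall pass rate.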

While such estimates give us an indication of what the effects of this year’s cancellation might look like, they do not account for actions taken to reduce the impact of the cancellations. For example, in a letter issued on the 17th of April, the Dutch Minister for Primary and Secondary Education and Media called on primary and secondary schools to put in extra effort to ensure that students start their first year of secondary education ‘at a level that does justice to their capacities and possibilities’. In doing so, he emphasized giving students the benefit of the doubt. Moreover, in secondary education, students who would not graduate on the basis of their school exam results, or who wished to improve their grades, were allowed to take an additional test in a maximum of two to three subjects (depending on their school track) so as to improve the final grade for those subjects.

Increased pass rates and potential score inflation

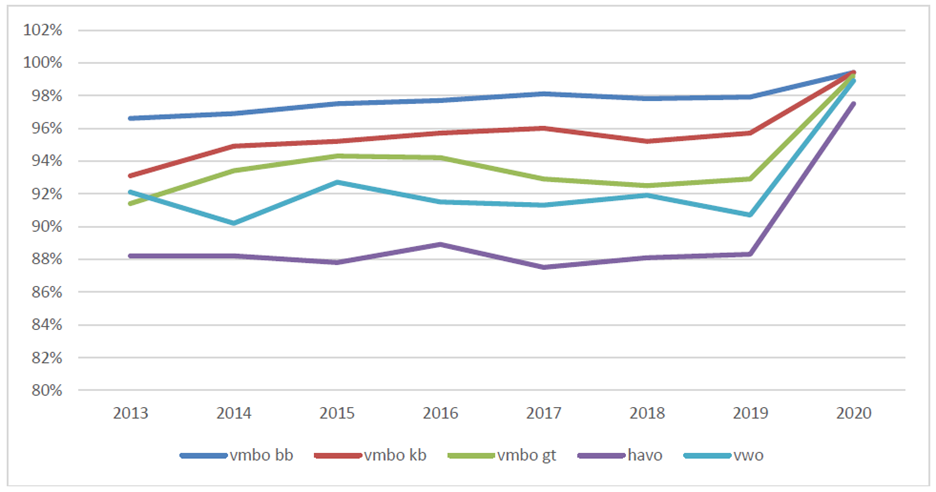

Recent evidence suggests that, in various countries, pass rates have increased as a result of changes in exams. For example, it has recently become clear that in 2020 the percentage of students in the Netherlands who graduated from secondary education increased by 5.9% compared to the year before in all school tracks (see Figure 1; the lines represent the different tracks in secondary education).

Figure 1: Percentage of students that graduated from secondary education 2013-2020. Source: DUO, 2020

It remains uncertain how this increase can be explained (DUO, 2020).

The above highlights the need for empirical research on the effects of changes made to national, standardized assessments. The urgency of this need is underlined by growing public and professional unrest about how to proceed in the coming year, and by concerns over score inflation and the legitimacy of public exams. Current health trends indicate that, at least in some parts of the world, alternative measures will be needed again.

ICSEI Research Lab: Standardized student assessment during and after the COVID-19 pandemic

To contribute to a better understanding of the impact of changes made to assessments, the International Congress for School Effectiveness and Improvement (ICSEI), through the Crisis Response in Education Network, has invited practitioners and researchers from around the globe to take part in a research lab on ‘Standardized student assessment during and after the COVID-19 pandemic’. This collaborative research initiative explores the different changes made worldwide to standardized assessments following the outbreak of the coronavirus, and examines the short- and long-term consequences of these changes for students, teachers, schools and school systems. Moreover, by drawing on and conducting research in different contexts, the Research Lab reflects on ways to mitigate some of the unintended consequences of these changes and to improve standardized assessment in the future.

Stakeholder involvement in assessment to improve the robustness of public exams

While the Lab will run until February 2021, initial discussions have highlighted the importance of involving stakeholders in the design of, and decisions about, assessment systems, especially in times of crisis. This enables the pooling of knowledge and expertise and, at the same time, increases ownership of the proposed solutions. As argued by a panel of stakeholders who participated in a review of the measures adopted in Scotland in the aftermath of exam cancellations, ‘because COVID-19 is an unprecedented threat, normal processes are inadequate to deal with this. (…). No single organisation can solve this issue’ (Priestley et al., 2020, p.46, emphasis added).

At the same time, there might be significant benefits to involving teachers more actively in assessment decisions even in ‘ordinary’ times. In many countries, the changes in assessment during school closures resulted in greater reliance on educators’ assessment skills, but also showed that these skills were sometimes lacking. It has become clear that systems that already combine several assessments to inform key decisions on progression or qualification, including teacher assessment, are more robust in dealing with forced changes. In these systems, teachers have long been involved in the development of standardized assessment (e.g. by sitting on panels that develop and validate assessments) and have been trained in school to moderate assessment. This was found to mitigate some of the potential negative effects of assessment cancellations, such as loss of knowledge of learning deficiencies.

Now more than ever, education professionals as well as authorities need reliable assessment data to evaluate the impact of school closures and distance learning on students’ level of knowledge and skills. Involving educators more actively in decisions on assessment, and investing in assessment literacy among education professionals, seems a fruitful way to respond to current as well as future needs.

Bol, T., Witschge, J., Van de Werfhorst, H. G., and Dronkers, J. (2014). Curricular Tracking and Central Examinations: Counterbalancing the Impact of Social Background on Student Achievement in 36 Countries. Social Forces, 92(4), 1545–1572.

CPB. (2020a). Schrappen eindtoets groep 8 kan ongelijkheid vergroten. https://www.cpb.nl/schrappen-eindtoets-groep-8-kan-ongelijkheid-vergroten

CPB. (2020b). Effect schrappen centraal examen zonder aanvullende maatregelen. https://www.cpb.nl/effect-schrappen-centraal-examen-zonder-aanvullende-maatregelen

DUO. (2020). Examenresultaten VO 2020. https://www.rijksoverheid.nl/binaries/rijksoverheid/documenten/kamerstukken/2020/10/13/rapportage-duo-examenresultaten-2020/rapportage-duo-examenresultaten-2020.pdf

Priestley, M., Shapira, M., Priestley, A., Ritchie, M., and Barnett, C. (2020). Rapid Review of National Qualifications Experience 2020. https://www.gov.scot/groups/rapid-review-of-national-qualifications-experience-2020/
